We’d all love to have NVIDIA’s RTX 4090, but its prohibitive $1,599 price (if you can even find it at that price) and huge power consumption make it hard to justify. That leaves eager PC gamers with only one other new NVIDIA option this year: the RTX 4080, which costs $1,199 and comes with 16GB of video memory (VRAM).

Some gamers may be enticed by the $400 savings, although that isn’t exactly a massive discount in the world of high-end PC gaming (and certainly not as significant as the $899 12GB RTX 4080 that NVIDIA “unlaunched”).

After all, it supports NVIDIA’s impressive DLSS 3 upscaling technology (which is limited to 4000-series GPUs) and is faster than the RTX 3080 Ti that came out earlier this year at the same price. The RTX 4080 is a potent GPU that will please anyone who wants to game at 4K with ray tracing, provided they can go without the bragging rights of owning a 4090.

However, anyone limited to a lower-resolution display would be wise to hold off until AMD’s RDNA 3 GPUs and the 4070/4060 cards are released.

The AD103 silicon used in the GeForce RTX 4080 measures 378.6 mm², which is 38% smaller than the AD102 silicon used in the RTX 4090, and it has 40% fewer transistors.

However, compared to the previous generation’s 3090 Ti flagship, the 4080 still has 62% more transistors. Given these numbers, it’s not surprising that the 4080 has 41% fewer CUDA cores than the 4090, a 33% narrower memory bus, and 11% less L2 cache. The RTX 4080 and 4090 are nearly identical in terms of their boost clocks, coming in at 2505 MHz and 2520 MHz, respectively, which gives the 4090 a slight advantage.
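The percentages quoted above follow from simple ratios between the two cards' specifications. The sketch below shows that arithmetic. Note that the absolute figures in it (core counts, bus widths, cache sizes, transistor counts) are assumptions taken from commonly published spec sheets, not numbers stated in this review, and `percent_less` is a hypothetical helper name.

```python
def percent_less(smaller: float, larger: float) -> float:
    """Return how much smaller `smaller` is than `larger`, in percent."""
    return (1 - smaller / larger) * 100


# (RTX 4080, RTX 4090) pairs. Assumed spec-sheet values, not from the review.
specs = {
    "CUDA cores": (9728, 16384),
    "Memory bus (bits)": (256, 384),
    "L2 cache (MB)": (64, 72),
    "Transistors (billions)": (45.9, 76.3),
}

deficits = {name: percent_less(a, b) for name, (a, b) in specs.items()}

for name, pct in deficits.items():
    print(f"{name}: {pct:.0f}% less on the RTX 4080")
```

With those assumed inputs, the rounded results line up with the 41%, 33%, 11%, and 40% figures given in the text.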

The RTX 4080’s 22.4 Gbps GDDR6X memory is 7% faster than that of the series’ flagship, although the card’s overall memory bandwidth is 29% lower, at 717 GB/s, due to a narrower 256-bit memory bus. According to Nvidia, the RTX 4080 draws a maximum of 320 watts of total graphics power. That’s the same rating as the 3080, albeit with a slightly lower maximum GPU temperature (down from 93°C to 90°C).
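To see how a card with faster memory can still end up with less bandwidth, it helps to work through the usual formula: per-pin data rate multiplied by bus width, divided by 8 bits per byte. The sketch below is a minimal illustration. The RTX 4080 inputs come from the text, while the RTX 4090 inputs (21 Gbps on a 384-bit bus) are assumed from published specifications rather than stated here, and `memory_bandwidth_gb_s` is a hypothetical helper name.

```python
def memory_bandwidth_gb_s(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s.

    data_rate_gbps: per-pin data rate in Gbit/s.
    bus_width_bits: width of the memory bus in bits.
    """
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte


rtx_4080 = memory_bandwidth_gb_s(22.4, 256)  # figures quoted in the review
rtx_4090 = memory_bandwidth_gb_s(21.0, 384)  # assumed specs, not from the review

deficit_pct = (1 - rtx_4080 / rtx_4090) * 100

print(f"RTX 4080: {rtx_4080:.1f} GB/s")
print(f"RTX 4090: {rtx_4090:.1f} GB/s")
print(f"RTX 4080 bandwidth deficit: {deficit_pct:.0f}%")
```

Under these assumptions the 4080 works out to roughly 717 GB/s, about 29% below the 4090, because the narrower bus outweighs the faster memory chips.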

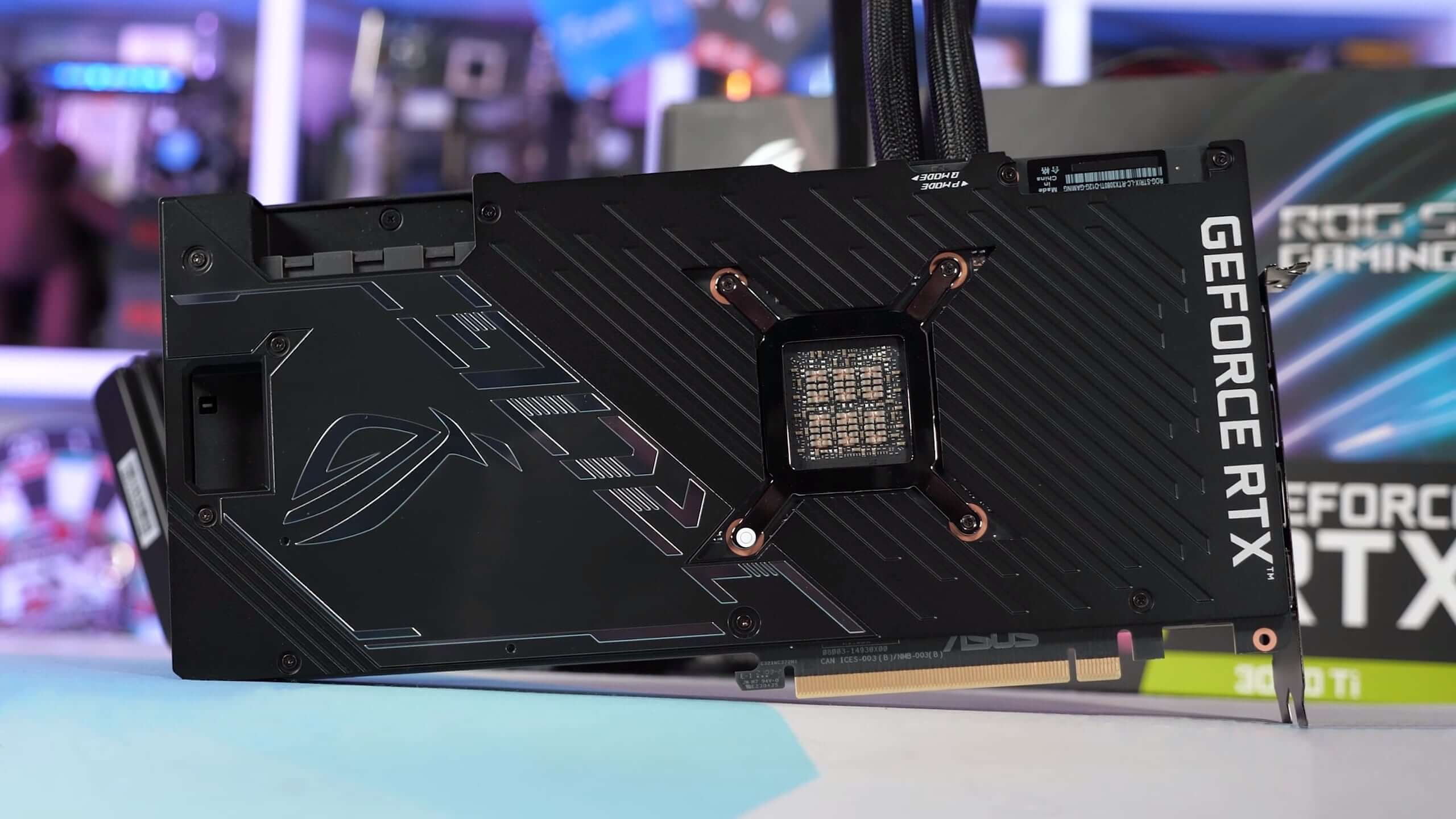

We’ll be using an 850 W power supply for testing, although the recommended minimum is 750 W. As far as we can tell, the only external difference between the Founders Edition RTX 4080 and the RTX 4090 Founders Edition is that the former is 3% lighter. We speculate that this is because the GPU die is smaller, the power delivery has been scaled back, and the memory configuration has changed.

We didn’t have time to disassemble it for this evaluation, so we can’t say whether or not Nvidia made any changes to the cooling. The important thing to note is that the two cards look identical. This also means the GeForce RTX 4080 is still powered via the divisive 16-pin 12VHPWR connector.

This connector has been the subject of much speculation over the past month, but the truth behind it remains, in our judgment, unclear. Since we think a lot more research is needed before drawing any firm conclusions, we won’t be adding any more fuel to that fire.

Previously, four 8-pin power connectors were needed to supply the same amount of power as a single PCIe 5.0 power connector. So that buyers don’t have to purchase a new PCIe 5.0-compliant power supply, the RTX 4080 includes a 3x 8-pin to single 16-pin adapter, just like the one included with the 3090 Ti.

In addition to the increased number of cores, 4th-gen Tensor cores, and 3rd-gen RT cores, the GeForce 40 series adds DLSS 3, which is currently only available on the new series. Aside from the release of a few more titles that support Nvidia’s DLSS 3 technology, not much has changed since we last tested it.

Though it was conducted shortly after the RTX 4090’s initial release, our analysis is still the most thorough explanation of the technology available. Our benchmarks show that the RTX 4080 behaves much like other cards when using DLSS 3. Nvidia has acknowledged our concerns and is working on several fixes, including for broken UI elements and transitions.

Our overall impression of DLSS 3 is that it is an improvement over DLSS 2, but with a narrower range of use cases. Players will see the maximum benefit from DLSS 3’s Frame Generation technology when a game is already running at a good frame rate (about 100 FPS before enabling frame generation), in which case it will increase the frame rate to roughly 180-200 FPS.

This works very well on high-refresh-rate displays, where visual artifacts are harder to spot and latency issues are less pronounced, and it improves overall visual smoothness. While it’s a welcome addition to Nvidia’s already robust lineup of features, we wouldn’t recommend it for games with frame rates under 120 fps.

While we intend to return to DLSS 3 benchmarks after Nvidia fixes the issues identified in our analysis, we decided against including them in this assessment. The major problem with DLSS 3 benchmarks is that 120 fps with DLSS 3 frame generation isn’t actually 120 fps. Instead, it offers the smoothness of 120 fps with the input responsiveness of around half that frame rate, plus a few graphical flaws.
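The gap between displayed and rendered frame rates can be made concrete with a small calculation. The sketch below is only a rough model: it assumes that about half of the displayed frames are generated rather than rendered (the "around half" figure from the text), and it ignores any extra latency the technique may add. The function name and the `generated_ratio` parameter are hypothetical.

```python
def frame_generation_breakdown(displayed_fps: float, generated_ratio: float = 0.5) -> dict:
    """Split a frame-generation result into displayed and rendered rates.

    generated_ratio is the assumed share of displayed frames that are
    generated rather than rendered by the game (0.5 is an assumption).
    """
    rendered_fps = displayed_fps * (1 - generated_ratio)
    return {
        "displayed_fps": displayed_fps,
        "rendered_fps": rendered_fps,
        # Time between frames shown on screen (perceived smoothness).
        "displayed_frame_time_ms": 1000 / displayed_fps,
        # Time between frames that actually sample player input.
        "rendered_frame_time_ms": 1000 / rendered_fps,
    }


result = frame_generation_breakdown(120)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

Under this assumption, a "120 fps" result with frame generation shows a new frame roughly every 8.3 ms, while the game only renders (and responds to input on) a frame roughly every 16.7 ms, which is what a native 60 fps would deliver.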

Because of this, we recommend that anyone conducting benchmarks with frame generation turned on report their findings as “120 DLSS 3.0 fps,” for instance.

All GPUs were tested at their official stock clock speeds, as specified by the manufacturer, without any overclocking. Our test system pairs a Ryzen 7 5800X3D processor with the MSI MPG X570S Carbon Max Wi-Fi motherboard and 32GB of dual-rank, dual-channel DDR4-3200 CL14 memory.
